Integrating LLMs into Web Apps: A Practical Guide to the OpenAI API

The honeymoon phase of AI is over. The days when you could slap together a web app that simply forwards a user's text prompt directly to ChatGPT and call it a "startup" are long gone. Today, clients expect AI to understand their specific proprietary data, take real actions on their behalf, and integrate securely into existing enterprise workflows. Here is how you actually build that.

What is the biggest mistake developers make with OpenAI?

A shocking number of junior developers building AI apps make a catastrophic security error: they call the OpenAI API directly from their frontend React or Vue code. Because frontend code ships to the end user's browser, anyone can open Chrome DevTools, go to the Network tab, read your sk-xxxx API key out of the request's Authorization header, and rack up a massive bill on your credit card overnight.

The Fix: You must build a secure backend proxy layer. Your React app calls your own Node.js server endpoint, and your server makes the API call to OpenAI using a key held in environment variables that never touch the browser. Put authentication and rate limits on that endpoint as well; otherwise attackers simply abuse your proxy instead of your key. This is non-negotiable for any production application.
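Here is a minimal sketch of the server-side piece, assuming Node 18+ and OpenAI's Chat Completions endpoint. The helper names (`buildUpstreamRequest`, `proxyChat`), the 4,000-character cap, and the model name are illustrative assumptions, not requirements. The HTTP transport is passed in as a parameter so the logic can be exercised without a network call.

```typescript
// Minimal shape of the HTTP call this sketch needs. Node 18+'s global
// `fetch` is expected to fit it; a fake can be passed in for tests.
type HttpPost = (
  url: string,
  init: { method: "POST"; headers: Record<string, string>; body: string }
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

interface UpstreamRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

// Assumed cap so a single request cannot run up the bill.
const MAX_PROMPT_CHARS = 4000;

// Builds the request the server sends to OpenAI. The key comes from the
// server's environment, never from the client.
function buildUpstreamRequest(apiKey: string, userMessage: string): UpstreamRequest {
  const text = userMessage.trim();
  if (text.length === 0 || text.length > MAX_PROMPT_CHARS) {
    throw new Error(`message must be between 1 and ${MAX_PROMPT_CHARS} characters`);
  }
  return {
    url: "https://api.openai.com/v1/chat/completions",
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      // The model name is a placeholder; use whichever model your account offers.
      body: JSON.stringify({
        model: "gpt-4o-mini",
        messages: [{ role: "user", content: text }],
      }),
    },
  };
}

// Called from your own endpoint (say POST /api/chat, a hypothetical route).
// Returns only the assistant's text, so the key and the raw upstream
// payload stay on the server.
async function proxyChat(apiKey: string, userMessage: string, post: HttpPost): Promise<string> {
  const { url, init } = buildUpstreamRequest(apiKey, userMessage);
  const res = await post(url, init);
  if (!res.ok) {
    throw new Error(`upstream request failed with status ${res.status}`);
  }
  const data = (await res.json()) as { choices?: { message?: { content?: string } }[] };
  return data.choices?.[0]?.message?.content ?? "";
}
```

In a route handler you would read the key from `process.env.OPENAI_API_KEY`, check that it is set, and call something like `proxyChat(apiKey, body.message, fetch)`. The browser only ever sees your endpoint and the returned text.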

How do I get the AI to answer questions about my company's internal data?

If you've ever tried to paste a 50-page employee handbook into ChatGPT, you know how quickly context window limits become frustrating. Fine-tuning a model on your data is not the answer either: it is expensive, difficult to maintain, has to be repeated whenever your company's facts and policies change, and still gives no guarantee that the model recalls a specific fact correctly.

The industry standard architecture is called Retrieval-Augmented Generation (RAG). Here is how it works in plain English:

- You split your company PDFs and documents into chunks (for example, paragraphs) and convert each chunk into a mathematical vector (an embedding) using OpenAI's embedding models.

- You store those vectors in a specialized Vector Database (like Pinecone or pgvector).

- When a user asks a question, your app embeds the question with the same model and searches the database for the 3 most relevant paragraphs using cosine similarity.

- You send only those 3 specific paragraphs to OpenAI alongside the user's question, instructing it to answer based solely on that provided context.

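This approach sharply reduces hallucinations because you are forcing the AI into an open-book test rather than letting it guess from its unpredictable pre-trained memory. It does not eliminate them: if retrieval returns the wrong paragraphs, the model can still answer incorrectly, so log which passages were used for each answer.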
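The retrieval and prompt-assembly steps can be sketched as follows. This assumes the embeddings were already computed and are held in memory; in a real deployment the vector database would replace the linear scan in `topK`. The names `DocChunk`, `topK`, and `buildGroundedMessages` and the wording of the system prompt are illustrative assumptions.

```typescript
interface DocChunk {
  text: string;
  embedding: number[]; // produced earlier by an embedding model
}

// Cosine similarity: dot(a, b) / (|a| * |b|). Returns 0 if either vector is all zeros.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("vectors must have the same dimension");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  const denom = Math.sqrt(normA) * Math.sqrt(normB);
  return denom === 0 ? 0 : dot / denom;
}

// Linear scan standing in for the vector database query:
// score every chunk against the question and keep the k best.
function topK(queryEmbedding: number[], chunks: DocChunk[], k: number): DocChunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosineSimilarity(queryEmbedding, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((scored) => scored.chunk);
}

// Assembles chat messages that pin the model to the retrieved context.
function buildGroundedMessages(
  question: string,
  context: DocChunk[]
): { role: "system" | "user"; content: string }[] {
  const contextBlock = context.map((c, i) => `[${i + 1}] ${c.text}`).join("\n\n");
  return [
    {
      role: "system",
      content:
        "Answer using only the context below. " +
        "If the answer is not in the context, say you don't know.\n\n" +
        contextBlock,
    },
    { role: "user", content: question },
  ];
}
```

The returned array is what you would pass as `messages` in the chat request. Numbering the passages makes it easy to ask the model to cite which one it used.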

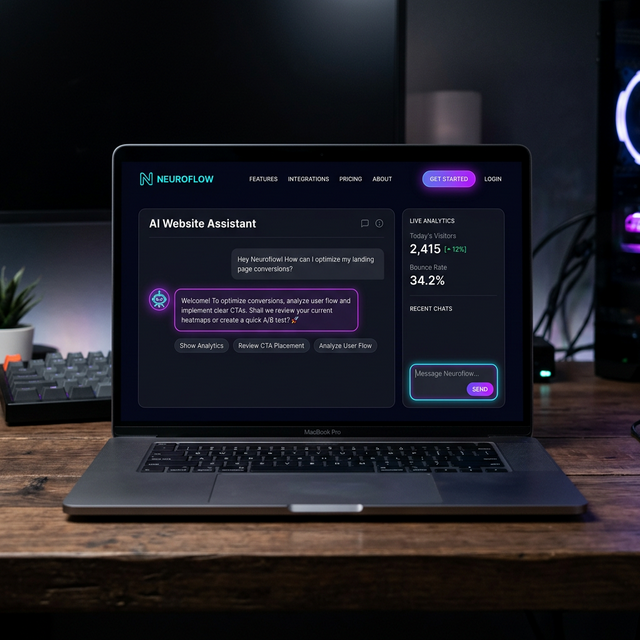

Can LLMs actually perform actions on my website?

Yes. This is the transition from "Chatbots" to "AI Agents." Using OpenAI's Function Calling capability, you define specific JSON schemas representing actions your backend can take (e.g., cancel_subscription(user_id) or book_appointment(date)).

Instead of just replying with text, the LLM analyzes the user's intent and returns a structured JSON object naming the function to call and the arguments to pass. The model never executes anything itself: your backend validates the request, performs the action, and sends the result back into the conversation so the model can write the final reply. This pattern is powering sophisticated customer service agents across the industry.
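A minimal sketch of the server side of that loop, assuming the Chat Completions `tools` format, where each tool call arrives with an `id`, a function `name`, and `arguments` as a JSON string. The handler table, the `sessionUserId` check, and the return payloads are hypothetical stand-ins for your real business logic.

```typescript
// Tool definition in the shape the Chat Completions `tools` parameter expects.
const cancelSubscriptionTool = {
  type: "function" as const,
  function: {
    name: "cancel_subscription",
    description: "Cancel the subscription for the given user.",
    parameters: {
      type: "object",
      properties: { user_id: { type: "string" } },
      required: ["user_id"],
      additionalProperties: false,
    },
  },
};

// Subset of one entry in `message.tool_calls` from the model's response.
interface ToolCall {
  id: string;
  function: { name: string; arguments: string }; // arguments is a JSON string
}

type ToolHandler = (args: Record<string, unknown>, sessionUserId: string) => string;

// Hypothetical backend actions keyed by tool name; real ones would hit your database.
const toolHandlers: Record<string, ToolHandler> = {
  cancel_subscription: (args, sessionUserId) => {
    const userId = args["user_id"];
    if (typeof userId !== "string") throw new Error("user_id must be a string");
    // Never trust model-supplied IDs: check them against the authenticated session.
    if (userId !== sessionUserId) throw new Error("not authorized for this user");
    return JSON.stringify({ status: "cancelled", user_id: userId });
  },
};

// Validates and runs one tool call, returning the `tool` message
// to append to the conversation before calling the model again.
function runToolCall(
  call: ToolCall,
  sessionUserId: string
): { role: "tool"; tool_call_id: string; content: string } {
  let content: string;
  try {
    const toolName = call.function.name;
    // Own-property check so names like "constructor" are not treated as tools.
    if (!Object.prototype.hasOwnProperty.call(toolHandlers, toolName)) {
      throw new Error(`unknown tool: ${toolName}`);
    }
    const args = JSON.parse(call.function.arguments) as Record<string, unknown>;
    content = toolHandlers[toolName](args, sessionUserId);
  } catch (err) {
    // Report failures back to the model instead of crashing the request.
    content = JSON.stringify({ error: err instanceof Error ? err.message : "tool failed" });
  }
  return { role: "tool", tool_call_id: call.id, content };
}
```

You would send `[cancelSubscriptionTool]` as `tools` in the chat request and run `runToolCall` for each entry in the response's `tool_calls`. The authorization check matters because the arguments are model output, which can be steered by whatever the user typed.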

The Bottom Line

By mastering the proxy architecture, RAG pipelines, and function calling, you move from building simple novelties to engineering robust AI integrations that solve real business problems and generate measurable ROI.